Introduction

Device sensors provide real-time insight into what people are doing (walking, running, driving, etc.). Knowing a user’s activity makes it possible, for instance, to interact with them through an app.

You can apply machine learning to detect activities by reading and processing this sensor data.

Healthcare professionals can test this methodology by downloading Neural Designer.

Contents

1. Objectives

2. Benefits

3. Approach

4. Conclusions

1. Objectives

Human Activity Recognition (HAR) classifies a person’s actions from sensor measurements.

With the rise of the Internet of Things, devices such as smartphones, smartwatches, and heart rate monitors can easily capture this data.

Feature extraction is usually performed with a fixed-length sliding window, requiring parameters such as window size and shift.
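The sliding-window step can be sketched in a few lines of Python. This is a minimal illustration, not the method of any particular tool: the function names, the choice of mean and standard deviation as features, and the sample values are all assumptions made for the example.

```python
from statistics import mean, pstdev


def sliding_windows(signal, window_size, shift):
    """Yield consecutive fixed-length windows; a trailing partial window is dropped."""
    for start in range(0, len(signal) - window_size + 1, shift):
        yield signal[start:start + window_size]


def extract_features(signal, window_size, shift):
    """Return one (mean, population standard deviation) pair per window."""
    return [(mean(w), pstdev(w)) for w in sliding_windows(signal, window_size, shift)]


# Hypothetical accelerometer magnitudes, used only to exercise the functions.
samples = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]

# A window of 4 samples shifted by 2 gives 50% overlap between neighbours.
features = extract_features(samples, window_size=4, shift=2)
```

A smaller shift produces more overlapping windows and therefore more training rows from the same recording, at the cost of stronger correlation between neighbouring rows.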

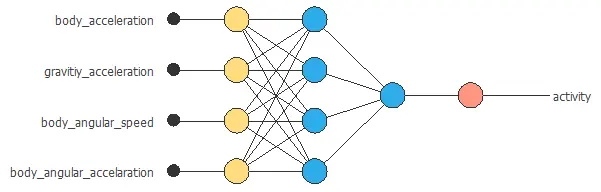

The sensor signals can include, among others:

- Body acceleration.

- Gravity acceleration.

- Body angular speed.

- Body angular acceleration.
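Body and gravity acceleration are usually not measured separately; they are derived from the total acceleration reported by the accelerometer. One common way to do this is a low-pass filter: the slowly varying part is treated as gravity and the remainder as body motion. The sketch below assumes a simple first-order (exponential) filter; the function name, the smoothing factor `alpha`, and the readings are hypothetical, and real pipelines may use a different filter design.

```python
def split_gravity_and_body(total_acc, alpha=0.9):
    """Split one acceleration axis into gravity and body components.

    gravity[t] = alpha * gravity[t-1] + (1 - alpha) * total[t]   (low-pass)
    body[t]    = total[t] - gravity[t]                            (remainder)
    """
    if not total_acc:
        return [], []
    gravity, body = [], []
    estimate = total_acc[0]  # seed the filter with the first sample
    for value in total_acc:
        estimate = alpha * estimate + (1 - alpha) * value
        gravity.append(estimate)
        body.append(value - estimate)
    return gravity, body


# Hypothetical vertical-axis readings in m/s^2: a device at rest, then a brief jolt.
readings = [9.8, 9.8, 9.8, 12.8, 9.8, 9.8]
gravity, body = split_gravity_and_body(readings)
```

A larger `alpha` makes the gravity estimate smoother but slower to follow real changes in device orientation.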

The machine learning model used for activity recognition is built on top of the sensors available on the device.

However, analyzing this data can be challenging: human activities are complex, and the same activity looks different from one individual to another.

2. Benefits

2.1. Monitor health

Analyze a person’s activity by carefully processing the information collected from various wearable devices and sensors to continuously monitor their health.

2.2. Discover activity patterns

Identify the key variables and patterns from the collected data that accurately determine which activity a person is performing.

2.3. Detect activity

Build a predictive model that can accurately recognize a person’s activity based on the signals received from multiple wearable devices and sensors over time.

2.4. Improve wellbeing

Use the analysis to design personalized exercise plans specifically aimed at improving the overall health and wellbeing of the individual.

3. Approach

Neural networks are well suited to determining a person’s physical activity because they can recognize the patterns in the sensor data.

The following graph illustrates a neural network that classifies different activities using smartphone data.
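As a rough sketch of what such a network computes, the example below runs a forward pass through one hidden layer and a softmax output. Everything in it is an assumption for illustration: the layer sizes, the `tanh` activation, the three activity labels (borrowed from the introduction), and the random weights, which stand in for values a trained model would have learned.

```python
import math
import random

ACTIVITIES = ["walking", "running", "driving"]  # example labels, not a fixed set


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum plus bias per output neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def classify(features, hidden_w, hidden_b, output_w, output_b):
    """Forward pass: features -> tanh hidden layer -> softmax over activities."""
    hidden = [math.tanh(h) for h in dense(features, hidden_w, hidden_b)]
    probabilities = softmax(dense(hidden, output_w, output_b))
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return ACTIVITIES[best], probabilities


# Untrained, randomly initialised weights: placeholders for learned parameters.
rng = random.Random(0)
n_inputs, n_hidden = 4, 5
hidden_w = [[rng.uniform(-1, 1) for _ in range(n_inputs)] for _ in range(n_hidden)]
hidden_b = [0.0] * n_hidden
output_w = [[rng.uniform(-1, 1) for _ in range(n_hidden)] for _ in range(len(ACTIVITIES))]
output_b = [0.0] * len(ACTIVITIES)

# Hypothetical feature vector, e.g. window statistics from the sensor signals.
label, probabilities = classify([0.2, 1.1, -0.4, 0.7],
                                hidden_w, hidden_b, output_w, output_b)
```

With untrained weights the predicted label is meaningless; the point is only the shape of the computation, where each window of sensor features maps to one probability per activity.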

4. Conclusions

Human activity recognition has a wide range of uses because of its impact on wellbeing.

It is becoming a fundamental tool in healthcare applications such as obesity prevention and elderly care.

Data sets

Further reading

- Deep learning for sensor-based activity recognition: A survey.

- Chernbumroong, S., Cang, S., Atkins, A., & Yu, H. (2013). Elderly activities recognition and classification for applications in assisted living. Expert Systems with Applications, 40(5), 1662-1674.

- Anguita, D., Ghio, A., Oneto, L., Parra, X., & Reyes-Ortiz, J. L. (2013, April). A public domain dataset for human activity recognition using smartphones. In ESANN.

- Mannini, A., & Sabatini, A. M. (2010). Machine learning methods for classifying human physical activity from on-body accelerometers. Sensors, 10(2), 1154-1175.