Text classification is a machine learning technique that assigns predefined categories to open text.


Introduction

The categorization or classification of information is one of the most widely used branches of Text Mining. The premise of classification techniques is simple: starting from a set of documents that have each been assigned a category or label, the objective is to build a system that learns the patterns in those documents and uses them to determine the class of new ones. While the model is being trained, the aim is to minimize the error between the categories predicted by the system and the real ones previously assigned.

Some examples of applications for text classification are spam detection, sentiment analysis, hate speech detection, and fake news detection.

Depending on the number of document classes, text classification problems can be:

- Binary: here, the model must identify whether a document belongs to a class. An example of this problem is spam detection, where we must determine whether an email is spam.

- Multiclass: here, each document must be assigned one class from a list of more than two. An example is sentiment analysis, where emotions (happiness, sadness, joy, …) are the classes.

It is essential to highlight the difficulty of the data labeling process. Generally, experts in the field of application carry out this process manually.

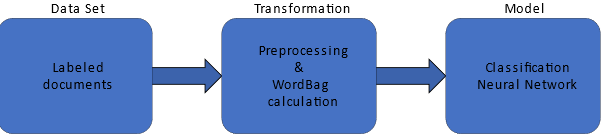

This image summarizes the text classification training process.

We can divide the text classification process into the following steps:

- Data processing and transformation

- Model training

- Testing analysis

1. Data processing and transformation

The transformation process in a classification problem comprises two stages: normalization and numerical representation.

Normalization

In classification problems, the computational cost is sometimes very high, and reducing the number of input variables helps obtain better results faster. For this purpose, document normalization is generally applied. This process uses some of the following techniques to reduce the number of distinct input words:

- Lowercase transformation: for example, “LoWerCaSE” is transformed into “lowercase.”

- Removal of punctuation and special characters: punctuation signs and special characters such as “;”, “#”, or “=” are removed.

- Stop word elimination: stop words are very common words in a language that carry little information useful for the model. For example, some stop words in English are “myself”, “can”, and “under”.

- Short and long word deletion: short words are eliminated because they do not provide much information, for example, the word “he”. On the other hand, long words are eliminated because of their low frequency in the documents.

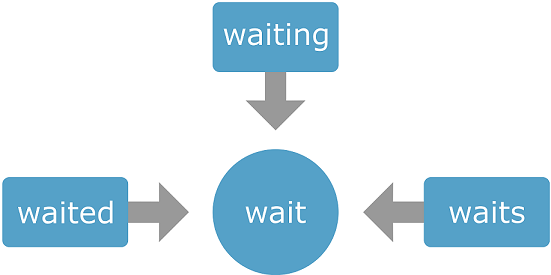

- Stemming: a word can be decomposed into a stem (or root), the part that does not vary and carries its core meaning, and affixes (morphemes added to the stem to form new words).

The stemming technique replaces each word with its stem, so that, for example, “connected”, “connecting”, and “connection” are all reduced to “connect”. This yields fewer distinct input words.
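The normalization steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the stop-word list is a tiny hypothetical sample, the length limits (3 to 15 characters) are arbitrary, and `toy_stem` is a hypothetical suffix stripper, not the Porter stemmer or any other standard algorithm. Real projects usually rely on a library stemmer and a full stop-word list.

```python
import re

# Tiny illustrative stop-word list (an assumption; real lists are much longer).
STOP_WORDS = {"i", "myself", "can", "under", "the", "and", "a", "an", "is", "to", "of"}

# Hypothetical suffixes for a toy stemmer; this is NOT the Porter algorithm.
SUFFIXES = ("ing", "ion", "ed", "es", "s")


def toy_stem(word: str) -> str:
    """Strip the first matching suffix, keeping a stem of at least 3 characters."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word


def normalize(text: str, min_len: int = 3, max_len: int = 15) -> list[str]:
    # 1. Lowercase transformation.
    text = text.lower()
    # 2. Replace punctuation and special characters with spaces.
    text = re.sub(r"[^a-z0-9\s]", " ", text)
    tokens = text.split()
    # 3. Stop word elimination.
    tokens = [t for t in tokens if t not in STOP_WORDS]
    # 4. Short and long word deletion.
    tokens = [t for t in tokens if min_len <= len(t) <= max_len]
    # 5. Stemming (toy version).
    return [toy_stem(t) for t in tokens]


print(normalize("I connected; the CONNECTION is connecting #fast!"))
# ['connect', 'connect', 'connect', 'fast']
```

Note that the regular expression keeps only ASCII letters and digits, so accented characters would also be removed; a real pipeline for other languages needs a broader character class.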

Once we have processed and normalized the documents, we must transform them into a numerical format that neural networks can process.

Numerical representation

The idea is to represent each document as a vector in an n-dimensional vector space, which the neural network can then take as input to perform various tasks. One of the simplest traditional text representation techniques is Bag of Words.

Bag of Words

Bag-of-Words (BoW) consists of building a vocabulary (dictionary) of the words in the working dataset and representing each document by the counts of those words. Each document is thus represented by a vector whose length equals the number of words in the vocabulary, and each element holds the number of times the corresponding token appears in the document.

| Document | water | steak | want | don’t | the | some | and | I |
|---|---|---|---|---|---|---|---|---|
| “I want some water.” | 1 | 0 | 1 | 0 | 0 | 1 | 0 | 1 |
| “I want the steak.” | 0 | 1 | 1 | 0 | 1 | 0 | 0 | 1 |
| “I want steak, and I want water.” | 1 | 1 | 2 | 0 | 0 | 0 | 1 | 2 |
| “I don’t want water.” | 1 | 0 | 1 | 1 | 0 | 0 | 0 | 1 |

We call this method “bag of words” because it does not represent the order of the words. It is also important to note that new documents containing words that are not in the vocabulary can still be transformed: the unknown words are simply omitted.
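A minimal Bag of Words sketch, assuming a simplified tokenizer (lowercasing and stripping basic punctuation) rather than the full normalization pipeline. Vocabulary indices are assigned in order of first appearance, so the column order differs from the table, and words outside the vocabulary are ignored, as described above.

```python
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Simplified tokenizer: replace basic punctuation with spaces and lowercase."""
    for ch in ".,;:!?":
        text = text.replace(ch, " ")
    return text.lower().split()


def build_vocabulary(docs: list[str]) -> dict[str, int]:
    """Map each distinct token to an index, in order of first appearance."""
    vocab: dict[str, int] = {}
    for doc in docs:
        for token in tokenize(doc):
            vocab.setdefault(token, len(vocab))
    return vocab


def bag_of_words(doc: str, vocab: dict[str, int]) -> list[int]:
    """Count vector of length len(vocab); unknown tokens are omitted."""
    vector = [0] * len(vocab)
    for token, count in Counter(tokenize(doc)).items():
        if token in vocab:
            vector[vocab[token]] = count
    return vector


docs = [
    "I want some water.",
    "I want the steak.",
    "I want steak, and I want water.",
    "I don't want water.",
]
vocab = build_vocabulary(docs)
# vocab: {'i': 0, 'want': 1, 'some': 2, 'water': 3, 'the': 4, 'steak': 5, 'and': 6, "don't": 7}
print(bag_of_words(docs[2], vocab))          # [2, 2, 0, 1, 0, 1, 1, 0]
print(bag_of_words("I want juice.", vocab))  # [1, 1, 0, 0, 0, 0, 0, 0]
```

The vocabulary is built only from the training documents; "juice" in the last call is not in it, so it contributes nothing to the vector.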

The BoW model has several drawbacks. One of the most relevant is that, as the corpus grows, the vocabulary grows with it. The resulting vectors are therefore very long and sparse (mostly zeros), which implies higher memory consumption.
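To illustrate the sparsity problem, the sketch below assumes a hypothetical 10,000-word vocabulary in which a short document uses only three distinct words, and stores the vector as a dictionary of its non-zero entries instead. The vocabulary size and the `to_sparse` and `sparsity` helpers are assumptions for illustration; libraries such as SciPy provide production-grade sparse matrix formats.

```python
def to_sparse(vector: list[int]) -> dict[int, int]:
    """Keep only the non-zero entries as {index: count}."""
    return {i: v for i, v in enumerate(vector) if v != 0}


def sparsity(vector: list[int]) -> float:
    """Fraction of entries that are zero."""
    return sum(1 for v in vector if v == 0) / len(vector)


# Hypothetical 10,000-word vocabulary; the document uses only 3 distinct words.
dense = [0] * 10_000
dense[17], dense[342], dense[9001] = 1, 2, 1

sparse = to_sparse(dense)
print(len(dense), len(sparse))  # 10000 3
print(sparsity(dense))          # 0.9997
```

Storing three index/count pairs instead of 10,000 integers is what makes sparse formats attractive for BoW vectors.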

2. Model training

Once the numerical representation of the documents has been obtained, we can start training a classification neural network. Such a network usually consists of a scaling layer, one or several perceptron layers, and a probabilistic layer.
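The layer structure just described can be sketched as a forward pass in NumPy. This is an illustrative assumption, not a definitive implementation: the scaling layer standardizes each input with the mean and standard deviation of the data, the single perceptron layer uses a tanh activation, and the probabilistic layer applies softmax so that each row of outputs sums to 1. The weights are random here; training them is not shown.

```python
import numpy as np


def scaling_layer(x: np.ndarray, mean: np.ndarray, std: np.ndarray) -> np.ndarray:
    """Standardize each input feature; constant features are left unscaled."""
    return (x - mean) / np.where(std == 0, 1.0, std)


def perceptron_layer(x: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Fully connected layer with a tanh activation (activation choice is an assumption)."""
    return np.tanh(x @ w + b)


def probabilistic_layer(x: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Linear layer followed by softmax, giving one probability per class."""
    z = x @ w + b
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


# Toy batch: 4 documents as count vectors over an 8-word vocabulary, 2 classes.
X = np.array([
    [1, 0, 1, 0, 0, 1, 0, 1],
    [0, 1, 1, 0, 1, 0, 0, 1],
    [1, 1, 2, 0, 0, 0, 1, 2],
    [1, 0, 1, 1, 0, 0, 0, 1],
], dtype=float)

rng = np.random.default_rng(seed=0)
n_inputs, n_hidden, n_classes = X.shape[1], 5, 2
w1, b1 = rng.normal(size=(n_inputs, n_hidden)), np.zeros(n_hidden)
w2, b2 = rng.normal(size=(n_hidden, n_classes)), np.zeros(n_classes)

scaled = scaling_layer(X, X.mean(axis=0), X.std(axis=0))
hidden = perceptron_layer(scaled, w1, b1)
probs = probabilistic_layer(hidden, w2, b2)
print(probs.shape)        # (4, 2)
print(probs.sum(axis=1))  # each row sums to 1 (up to rounding)
```

In practice, the scaling statistics should be computed on the training subset only and reused unchanged for selection and testing data.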

3. Testing analysis

As with any classification problem, model evaluation is essential. However, in text classification there are no absolute reference values for the evaluation metrics, because what counts as a good result depends on the specific task: classifying medical texts is not the same as classifying whether a review is positive or negative. Therefore, the usual practice is to look in the literature for baselines on similar tasks and compare against them to check whether our results are acceptable.

As with a traditional classification task, the most used metrics are:

Confusion matrix

In the confusion matrix, the rows represent the target classes in the data set, and the columns represent the predicted output classes from the neural network.

The following table represents the confusion matrix:

| | Predicted class 1 | … | Predicted class N |
|---|---|---|---|
| Real class 1 | # | # | # |
| … | # | # | # |
| Real class N | # | # | # |
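A confusion matrix with this layout (rows are real classes, columns are predicted classes) can be computed with a few lines of Python. This sketch assumes class labels are integers from 0 to N-1; the labels in the example are made up for illustration.

```python
def confusion_matrix(y_true: list[int], y_pred: list[int], n_classes: int) -> list[list[int]]:
    """Rows index the real class, columns index the predicted class."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for real, predicted in zip(y_true, y_pred):
        matrix[real][predicted] += 1
    return matrix


# Hypothetical labels for six documents and three classes.
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 2, 0]
for row in confusion_matrix(y_true, y_pred, n_classes=3):
    print(row)
# [1, 1, 0]
# [0, 2, 0]
# [1, 0, 1]
```

The diagonal holds the correctly classified documents; every off-diagonal entry counts documents of the row's real class that were predicted as the column's class.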


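Precision

Precision measures the fraction of documents predicted as a given class that actually belong to it:

$$ \text{precision} = \frac{\#\ \text{true positives}}{\#\ \text{true positives} + \#\ \text{false positives}} $$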
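In a multiclass setting, precision is usually computed per class. With rows as real classes and columns as predicted classes, the true positives of class k are the diagonal entry matrix[k][k], and true positives plus false positives are the total of column k (everything predicted as class k). A minimal sketch, assuming that layout and a hypothetical 3-class matrix; returning 0.0 for a class that is never predicted is a convention chosen here, not the only option.

```python
def precision_per_class(matrix: list[list[int]]) -> list[float]:
    """Precision of each class k: matrix[k][k] / (sum of column k)."""
    n = len(matrix)
    precisions = []
    for k in range(n):
        predicted_as_k = sum(matrix[i][k] for i in range(n))  # TP + FP for class k
        true_positives = matrix[k][k]
        # Define precision as 0.0 when the class is never predicted (an assumption).
        precisions.append(true_positives / predicted_as_k if predicted_as_k else 0.0)
    return precisions


matrix = [
    [1, 1, 0],
    [0, 2, 0],
    [1, 0, 1],
]
print(precision_per_class(matrix))  # [0.5, 0.6666666666666666, 1.0]
```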

In addition, to arrive at a quality classifier, we must find the balance between underfitting and overfitting.

An underfitted model has low variance but high bias: its predictions change little when it is trained on different samples of the data, but they are systematically far from reality. This typically happens when the model is too simple, or has been trained too little, to capture the existing patterns in the data.

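An overfitted model, by contrast, has high variance: it fits the training documents very closely, noise included, and performs poorly on new ones. Finding the optimal point between the two therefore requires evaluating the model on entirely new data that was not used during training. For this reason, it is advisable to split the corpus into multiple subsets: training, selection (validation), and testing.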
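A minimal sketch of such a split, assuming a random shuffle with a fixed seed and a 60/20/20 ratio for training, selection, and testing (the ratio and the `split_corpus` helper are assumptions for illustration). For imbalanced classes, a stratified split that preserves the class proportions in each subset is often preferable.

```python
import random


def split_corpus(docs, labels, train=0.6, selection=0.2, seed=0):
    """Shuffle and split into training, selection (validation) and testing subsets.

    The remaining fraction, 1 - train - selection, goes to testing.
    """
    indices = list(range(len(docs)))
    random.Random(seed).shuffle(indices)  # fixed seed for reproducibility
    n_train = int(train * len(docs))
    n_selection = int(selection * len(docs))
    parts = (
        indices[:n_train],
        indices[n_train:n_train + n_selection],
        indices[n_train + n_selection:],
    )
    return [([docs[i] for i in part], [labels[i] for i in part]) for part in parts]


docs = [f"document {i}" for i in range(10)]
labels = [i % 2 for i in range(10)]
(train_x, train_y), (sel_x, sel_y), (test_x, test_y) = split_corpus(docs, labels)
print(len(train_x), len(sel_x), len(test_x))  # 6 2 2
```

The selection subset is used to compare models and tune settings; the testing subset is kept aside and used only once, for the final evaluation.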

Conclusions

Text classification is one of the most widely used Text Mining techniques today, with applications ranging from categorizing reviews as positive or negative to organizing support messages by urgency.

In this article, we have covered the different stages of a text classification problem: data processing and transformation, model training, and testing analysis. Following these steps provides a solid basis for building effective text classification models.