The main goal is to design a classification machine learning model that classifies different star types.

The categories are Red Dwarf, Brown Dwarf, White Dwarf, Main Sequence, Supergiants, or Hypergiants.

The classification is given according to luminosity, radius, color, and other star characteristics.

Contents

1. Application type

This is a classification project. Indeed, the variable to be predicted is categorical.

These variables are detailed in the following section.

2. Data set

The first step is to prepare the data set. This is the source of information for the classification problem.

For that, we need to configure the following concepts:

- Data source.

- Variables.

- Instances.

Data source

The data source is the CSV file Stars.csv.

The number of columns is 7, and the number of rows is 240.

Variables

The variables are:

Physical Properties

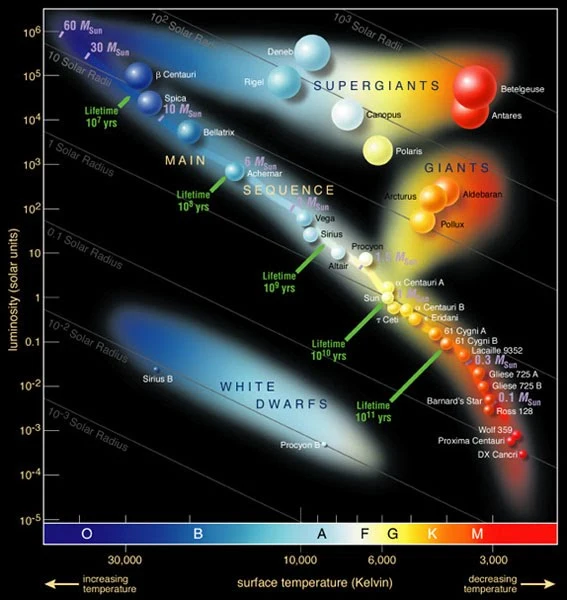

- Temperature: Surface temperature of the star, measured in Kelvin (K).

- Luminosity: Brightness of the star relative to the Sun (L/L0L/L_0).

- Relative radius: Radius of the star compared to the Sun (R/R0R/R_0).

- Absolute magnitude: Intrinsic brightness of the star as seen from a standard distance.

Spectral Properties

- Color: General color of the star’s spectrum (e.g., blue, white, yellow, red).

- Spectral class: Classification by spectral type (O, B, A, F, G, K, M).

Target Variable

Star type: Stellar category (red dwarf, brown dwarf, white dwarf, main sequence, supergiant, hypergiant).

- Red Dwarf: 1 0 0 0 0 0.

- Brown Dwarf: 0 1 0 0 0 0.

- White Dwarf: 0 0 1 0 0 0.

- Main Sequence: 0 0 0 1 0 0.

- Supergiants: 0 0 0 0 1 0.

- Hypergiants: 0 0 0 0 0 1.

Instances

Variables distributions

We can calculate the distributions of all variables.

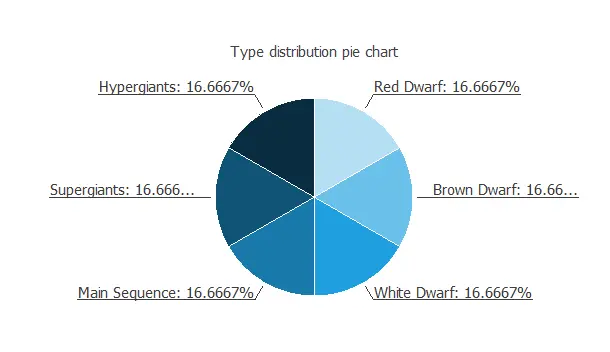

The following figure is the pie chart for the star types.

As we can see, the target is well-distributed.

3. Neural network

The second step is to choose a neural network.

For classification problems, it is usually composed of:

- A scaling layer.

- A perceptron layer.

- A probabilistic layer.

The scaling layer contains the statistics on the inputs calculated from the data file and the method for scaling the input variables.

The number of perceptron layers is 1. This perceptron layer has 22 inputs and 6 neurons.

The probabilistic layer allows the outputs to be interpreted as probabilities. This means that all the outputs are between 0 and 1, and their sum is 1.

The softmax probabilistic method is used here. The neural network has six outputs since the target variable contains six classes (Red Dwarf, Brown Dwarf, White Dwarf, Main Sequence, Supergiants, and Hypergiants).

4. Training strategy

The fourth step is to set the training strategy.

It is composed of:

- Loss index.

- Optimization algorithm.

Loss index

The loss index chosen for this application is the normalized squared error with L1 regularization.

The error term fits the neural network to the training instances of the data set.

The regularization term makes the model more stable and improves generalization.

Optimization algorithm

The optimization algorithm searches for the neural network parameters that minimize the loss index.

The quasi-Newton method is chosen here.

Training

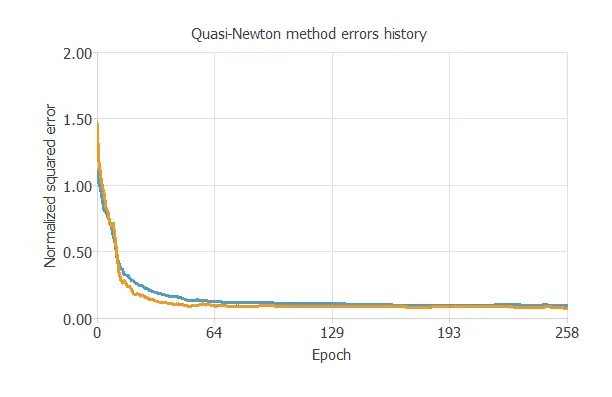

The following chart shows how training and selection errors decrease with the epochs during training.

The final values are training error = 0.091 NSE (blue) and selection error = 0.073 NSE (orange).

5. Model selection

The objective of model selection is to find the network architecture with the best generalization properties.

That is the model that minimizes the error on the selected instances of the data set.

Order selection algorithms train several network architectures with a different number of neurons and select the one with the smallest selection error.

The incremental order method starts with a few neurons and increases the complexity at each iteration.

6. Testing analysis

The purpose of the testing analysis is to validate the generalization performance of the model.

Here, we compare the neural network outputs to the corresponding targets in the testing instances of the data set.

Confusion matrix

In the confusion matrix, the rows represent the targets (or real values), and the columns represent the corresponding outputs (or predicted values).

The diagonal cells show the correctly classified cases, and the off-diagonal cells show the misclassified cases.

| Predicted Red Dwarf | Predicted Brown Dwarf | Predicted White Dwarf | Predicted Main Sequence | Predicted Supergiants | Predicted Hypergiants | |

|---|---|---|---|---|---|---|

| Real Red Dwarf | 7(14.6%) | 0 | 0 | 0 | 0 | 0 |

| Real Brown Dwarf | 0 | 10 (20.8%) | 0 | 0 | 0 | 0 |

| Real White Dwarf | 0 | 0 | 7 (14.6%) | 0 | 0 | 0 |

| Real Main Sequence | 0 | 0 | 0 | 10 (20.8%) | 0 | 0 |

| Real Supergiants | 0 | 0 | 0 | 0 | 6 (12.5%) | 0 |

| Real Hypergiants | 0 | 0 | 0 | 0 | 0 | 8 (16.7%) |

As we can see, the model correctly predicts 48 instances (100%). Therefore, there are no misclassified cases.

This shows that our predictive model has excellent accuracy.

7. Model deployment

The neural network is now ready to predict outputs for inputs it has never seen. This process is called model deployment.

To classify any given star, we calculate the neural network outputs from the different variables: temperature, luminosity, relative radius, absolute magnitude, color, and spectral class.

For example, if we introduce the following values for each input:

- Temperature: 10497.5 K.

- L (L/L_0): 107188.

- R (R/R_0): 237.2.

- A_M: 4.4.

- Color: Red.

- Spectral_Class: M.

The model predicts that the star belongs to each category with these probabilities.

- Probability of Red Dwarf: 1.3 %.

- Probability of Brown Dwarf: 81.1 %.

- Probability of White Dwarf: 0.2 %.

- Probability of Main Sequence: 7.3 %.

- Probability of Supergiants: 8.2 %.

- Probability of Hypergiants: 1.9 %.

The neural network would classify the star as a Brown Dwarf in this case, since it has the highest probability.

Conclusions

In conclusion, we have built a predictive model from which to determine the category of a star.

References

- Kaggle. Star dataset to predict star types.